Exus Blog Article

Explaining AI Decisions Without Exposing Models: A Collections-Grade Approach to Transparency

The debt collections industry has crossed a threshold. Between 2024 and 2025, the adoption of AI by collection agencies surged from 73% to 93% [1]. AI is no longer an experimental pilot program; it is the standard infrastructure driving how financial institutions interact with customers, assess risk, and execute recovery strategies at scale.

The business case driving this adoption is undeniably proven. Financial institutions deploying AI in collections are reporting recovery rate improvements of 25% to 40% over traditional methods, alongside operational cost reductions of up to 60% [2] [3]. Yet this rapid adoption has created a growing tension in boardrooms and compliance teams alike. Financial institutions desperately want the predictive power and margin improvements of AI, but regulators, auditors, and consumers rightfully demand explainability.

How can financial institutions build trust in AI-driven decisions without exposing the proprietary mathematical models behind them?

At EXUS, we reject the opaque, "black box" approach entirely.

We engineer a system-level, collections-grade framework for transparency. Financial institutions do not need to publish the raw weights and biases of a neural network to prove it operates fairly. They need rigorous explainability at the point of decision, continuous monitoring across the model lifecycle, and comprehensive observability for every AI-generated action.

Explainability goes beyond mere compliance. As Michael S. Barr, Vice Chair for Supervision at the Federal Reserve, noted:

"Every financial institution should recognize the limitations of the technology, explore where and when GenAI belongs in any process, and identify how humans can be best positioned to be in the loop... frameworks for understanding model risk may need to be updated to address the complexity and challenges of explaining AI methods."

This article explores how financial institutions can deploy AI that is both highly effective and completely transparent.

The Governance Gap: Why Opaque AI Fails

Many organizations fall into a predictable trap. They adopt off-the-shelf, opaque AI tools that provide a short-term boost in recovery rates. The early results can be encouraging, but when a compliance team asks why a specific vulnerable customer was contacted seven times in a week, or why a particular demographic consistently receives less favorable settlement terms, the system offers no answers.

This lack of governance carries real consequences. A joint 2024 survey by the Bank of England and the UK Financial Conduct Authority (FCA) revealed a startling disconnect: while 75% of UK financial services firms are now using AI, only 34% say they have a "complete understanding" of how their AI systems actually work [2].

Operating an automated system without understanding its logic is a profound operational risk. Governance is not the enemy of AI performance; it is what makes AI deployment sustainable in regulated financial environments. Every communication sent to a customer must trace back to a specific rationale, grounded in a transparent behavioral science framework.

The Global Regulatory Reality

Regulatory scrutiny around AI in financial services and credit decisioning is no longer regional; it is becoming a synchronized global movement:

- In Europe, the EU AI Act classifies AI systems used "to evaluate the creditworthiness of natural persons or establish their credit score" as "High-Risk" [3]. This triggers mandatory obligations: transparent design, robust human oversight mechanisms, detailed technical documentation, and comprehensive audit logging.

- In the United States, the Consumer Financial Protection Bureau (CFPB) establishes clear boundaries regarding explainability. The Bureau states directly that creditors must specifically explain their reasons for adverse actions. Director Rohit Chopra has been explicit:

"Creditors must be able to specifically explain their reasons for denial. There is no special exemption for artificial intelligence." [4]

- In Southeast Asia, regulators are moving just as decisively. In September 2025, the Bank of Thailand (BOT) issued comprehensive AI Risk Management Guidelines for financial service providers, mandating strict explainability of AI outcomes, clear evaluation metrics for model accuracy, and specific measures to reduce AI hallucination risks [5]. Similarly, the Bangko Sentral ng Pilipinas (BSP) is establishing rules centered on ethical AI deployment, the management of algorithmic bias, and the requirement for clear evidence that AI outputs have adequate support [6].

Across all these jurisdictions, the message is identical: algorithmic complexity is never an excuse for failing to explain a decision.

Decoding the Two Sides of AI in Collections

To build an effective transparency framework, we must recognize that AI in collections consists of two distinct technologies. Each possesses different architectures, different failure modes and different explainability requirements.

| Dimension | Predictive ML | Generative AI |
| --- | --- | --- |
| Primary Function | Risk scoring, segmentation, next-best-action | Conversational negotiation, agent assistance |
| Key Risk | Bias, drift, opaque feature weighting | Hallucination, policy violation, tone failure |
| Explainability Method | Feature importance algorithms (XAI) | Agentic observability, tracing, evaluation |
| Monitoring Approach | Statistical stability tracking | Telemetry logging, compliance scoring |

For example, a bank deploying a predictive model to score accounts requires algorithmic explainability to satisfy model validation requirements. That same bank deploying an agentic AI-powered chatbot requires conversational tracing to satisfy consumer protection rules. These are different problems requiring different tools, integrated into a coherent governance framework.

Achieving Transparency in AI-Driven Debt Collections: Four Pillars of Explainable AI

At EXUS, we build a transparency-first framework on four interconnected pillars, ensuring that strategy executes itself safely, transparently and measurably.

Pillar 1: Full-Lifecycle Quality and Discrimination Metrics

Transparency begins with proving that an ML model is accurate, stable, and fair before it ever makes a decision. Our AI Decision Engine employs a comprehensive metrics framework to track model quality and prevent discrimination.

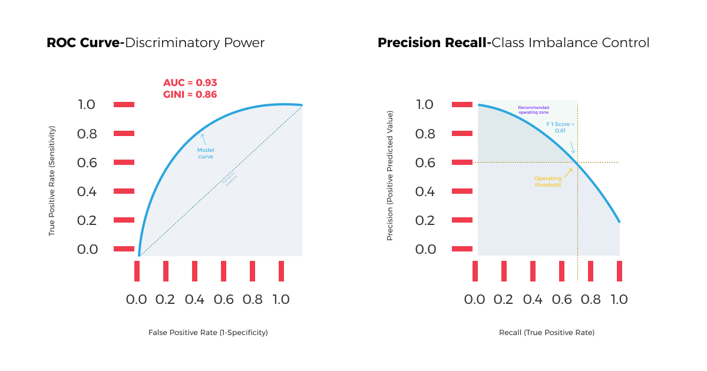

- To measure predictive power and handle class imbalance, we track ROC/AUC (Receiver Operating Characteristic / Area Under the Curve) and Gini coefficients, which show how well the model distinguishes between different customer outcomes (e.g., likely to pay vs. likely to default). We also monitor Precision, Recall, and F1 scores to ensure the model does not over-penalize specific segments when dealing with imbalanced datasets.

- To prove fairness, we conduct stability analysis across cohorts using the Kolmogorov-Smirnov (KS) statistic. The KS test measures the maximum distance between the cumulative distributions of different groups. If the KS statistic shows that a model treats a protected demographic fundamentally differently than the baseline population, the model is flagged for bias review before deployment.
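The metrics above can be illustrated with a minimal, standard-library sketch. It is not the EXUS implementation: the sample labels, scores, cohort names, and the `KS_REVIEW_THRESHOLD` value are all hypothetical, chosen only to show how each number is derived.

```python
from bisect import bisect_right


def roc_auc(labels, scores):
    """AUC as the chance that a random positive outscores a random negative."""
    pos = [s for y, s in zip(labels, scores) if y == 1]
    neg = [s for y, s in zip(labels, scores) if y == 0]
    # Count pairwise wins; a tie counts as half a win.
    wins = sum((p > n) + 0.5 * (p == n) for p in pos for n in neg)
    return wins / (len(pos) * len(neg))


def gini(labels, scores):
    """Gini coefficient, a linear rescaling of AUC: Gini = 2 * AUC - 1."""
    return 2 * roc_auc(labels, scores) - 1


def precision_recall_f1(labels, scores, threshold):
    """Precision, recall and F1 for the positive class at a score cut-off."""
    predicted = [s >= threshold for s in scores]
    tp = sum(p and y == 1 for p, y in zip(predicted, labels))
    fp = sum(p and y == 0 for p, y in zip(predicted, labels))
    fn = sum((not p) and y == 1 for p, y in zip(predicted, labels))
    precision = tp / (tp + fp) if tp + fp else 0.0
    recall = tp / (tp + fn) if tp + fn else 0.0
    f1 = 2 * precision * recall / (precision + recall) if precision + recall else 0.0
    return precision, recall, f1


def ks_statistic(sample_a, sample_b):
    """Two-sample KS: the largest gap between the two empirical CDFs."""
    a, b = sorted(sample_a), sorted(sample_b)
    return max(
        abs(bisect_right(a, x) / len(a) - bisect_right(b, x) / len(b))
        for x in a + b
    )


# Hypothetical data: label 1 = customer paid, score = predicted payment likelihood.
labels = [1, 1, 1, 0, 1, 0, 0, 0]
scores = [0.9, 0.8, 0.7, 0.6, 0.55, 0.4, 0.3, 0.2]

# Hypothetical score samples for two cohorts, and an assumed review threshold.
baseline_cohort = [0.2, 0.3, 0.4, 0.5, 0.6, 0.7]
protected_cohort = [0.1, 0.15, 0.2, 0.25, 0.3, 0.65]
KS_REVIEW_THRESHOLD = 0.3

auc = roc_auc(labels, scores)
ks = ks_statistic(baseline_cohort, protected_cohort)
needs_bias_review = ks > KS_REVIEW_THRESHOLD

print(f"AUC={auc:.4f} Gini={gini(labels, scores):.4f}")
print("precision, recall, F1:", precision_recall_f1(labels, scores, 0.5))
print(f"KS={ks:.2f} flag_for_bias_review={needs_bias_review}")
```

In this toy data the two cohorts' score distributions differ by a KS of 0.5, so the model would be routed to bias review under the assumed threshold. A production pipeline would more likely rely on an established statistics library and thresholds agreed with model validation teams.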

Pillar 2: Explainable Artificial Intelligence (XAI)

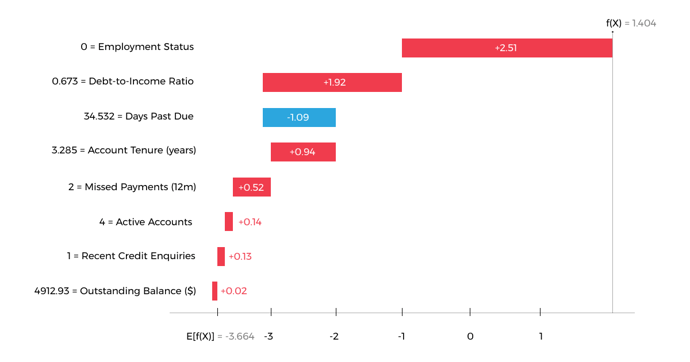

Explainable AI (XAI) refers to techniques that make machine learning decisions understandable to humans, a critical capability for regulated industries such as banking and debt collections. For our predictive models, we use mathematical methods such as SHAP (SHapley Additive exPlanations) to provide two distinct types of explainability:

- Global Explainability: This answers the question, “How does this model work overall?” It shows the aggregate feature importance across the entire portfolio. For example, a global view might show an auditor that, across one million accounts, "recent payment history" accounts for 40% of total feature importance, while "debt-to-income ratio" accounts for 25%.

- Local Explainability: This answers the question, “Why did the model make this specific decision for this specific customer?” SHAP calculates exactly how much each piece of customer data moved a single score up or down.

Consider a collections risk model that assigns a high priority score to a specific account. Without XAI, the system simply outputs "Score: 85." With local explainability, the system outputs a detailed breakdown: the score increased primarily because the customer missed a payment three months ago, though this risk was slightly offset because they have held the account for seven years. This mechanism allows banks to generate compliant adverse action notices derived directly from the mathematics of the model.
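To make the local and global views concrete, here is a minimal sketch that computes exact Shapley values for a toy scoring function. It does not use the SHAP library and is not the EXUS model: `toy_priority_score`, its weights, the feature names, and the single `BASELINE` account are all hypothetical. Production SHAP tooling typically averages over a background dataset rather than one baseline.

```python
from itertools import combinations
from math import factorial


def shapley_values(model, instance, baseline):
    """Exact Shapley values; features outside a coalition take baseline values."""
    names = list(instance)
    n = len(names)

    def value(coalition):
        mixed = {f: (instance[f] if f in coalition else baseline[f]) for f in names}
        return model(mixed)

    contributions = {}
    for feature in names:
        others = [f for f in names if f != feature]
        total = 0.0
        for size in range(n):
            # Standard Shapley weight for a coalition of this size.
            weight = factorial(size) * factorial(n - size - 1) / factorial(n)
            for subset in combinations(others, size):
                coalition = set(subset)
                total += weight * (value(coalition | {feature}) - value(coalition))
        contributions[feature] = total
    return contributions


def global_importance(model, instances, baseline):
    """Share of mean absolute Shapley value per feature across many accounts."""
    totals = {f: 0.0 for f in baseline}
    for inst in instances:
        for f, v in shapley_values(model, inst, baseline).items():
            totals[f] += abs(v)
    grand = sum(totals.values()) or 1.0
    return {f: t / grand for f, t in totals.items()}


def toy_priority_score(x):
    """Hypothetical linear priority score, for illustration only."""
    return (37
            + 40 * x["missed_payment_recent"]
            - 1.0 * x["account_tenure_years"]
            + 0.25 * x["utilization_pct"])


# Hypothetical reference account and the account being explained.
BASELINE = {"missed_payment_recent": 0, "account_tenure_years": 3, "utilization_pct": 52}
account = {"missed_payment_recent": 1, "account_tenure_years": 7, "utilization_pct": 60}

local = shapley_values(toy_priority_score, account, BASELINE)
print("score:", toy_priority_score(account))
for name, contribution in sorted(local.items(), key=lambda kv: -abs(kv[1])):
    print(f"{name}: {contribution:+.2f}")
```

For this account the score of 85 sits 38 points above the baseline score of 47: the recent missed payment contributes +40, the seven-year tenure contributes -4, and utilization contributes +2. The contributions always sum to the gap between the explained score and the baseline score, which is what lets a reason code be traced back to the model's arithmetic.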

Pillar 3: Proactive Drift Management

An AI model is only as accurate as the data it encounters in production. Over time, macroeconomic shifts, inflation, or changes in consumer behavior cause model drift: a degradation in performance that leads to inaccurate decisions.

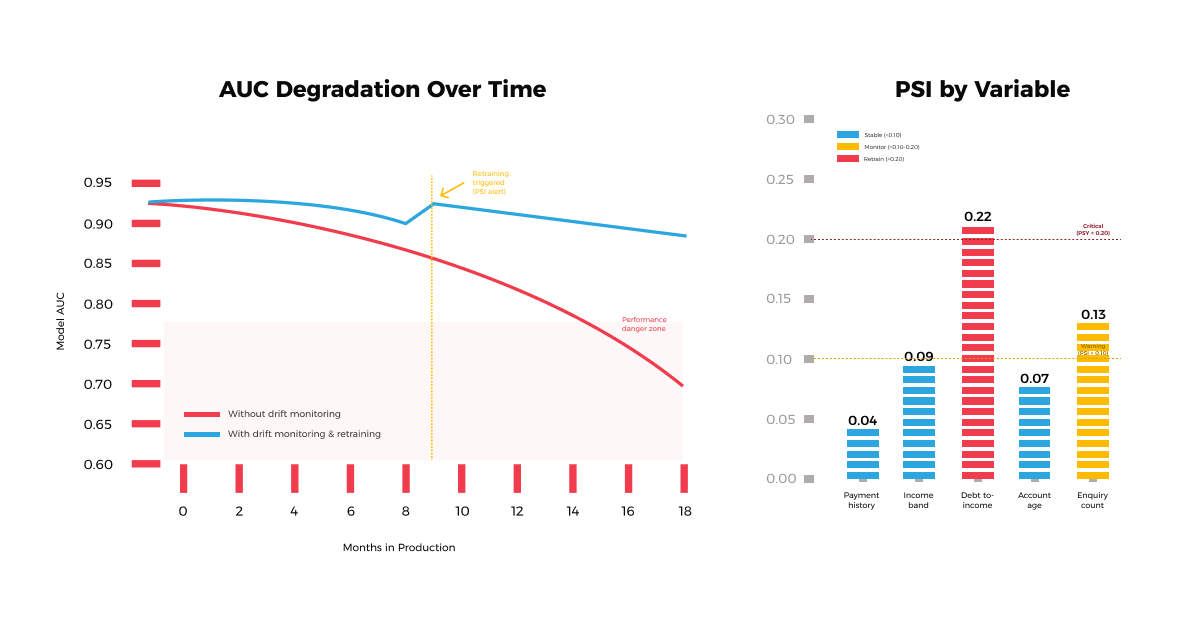

We treat drift management as a core operational discipline, monitoring for two distinct types of drift:

- Concept Drift: When the fundamental relationship between variables changes (e.g., a high income no longer guarantees repayment due to sudden inflation).

- Data Drift: When the distribution of incoming data changes (e.g., a sudden influx of younger borrowers entering the portfolio).

Our systems continuously monitor statistical metrics, like the Population Stability Index (PSI), to detect these shifts. PSI measures how much a population's distribution has shifted over time. If the PSI of a key variable crosses a critical threshold, the system triggers an alert. Retraining pipelines initiate, evaluation gates assess the new model candidate and a safe rollout is orchestrated.
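As a rough illustration of the PSI check described above, the sketch below compares a variable's binned distribution at training time with its distribution in production. The age bands and counts are invented, and the 0.10 and 0.25 cut-offs are a widely quoted rule of thumb used here as an assumption, not a statement of the thresholds EXUS applies.

```python
from math import log


def psi(expected_counts, actual_counts, eps=1e-6):
    """Population Stability Index over matching bins.

    PSI = sum((actual% - expected%) * ln(actual% / expected%)).
    Empty bins are floored at eps to avoid taking the log of zero.
    """
    exp_total = sum(expected_counts)
    act_total = sum(actual_counts)
    total = 0.0
    for e, a in zip(expected_counts, actual_counts):
        e_pct = max(e / exp_total, eps)
        a_pct = max(a / act_total, eps)
        total += (a_pct - e_pct) * log(a_pct / e_pct)
    return total


def psi_status(value, monitor_at=0.10, alert_at=0.25):
    """Map a PSI value to an action using assumed rule-of-thumb thresholds."""
    if value < monitor_at:
        return "stable"
    if value < alert_at:
        return "monitor"
    return "alert"


# Hypothetical borrower counts per age band: 18-29, 30-44, 45-59, 60+.
training_counts = [100, 300, 400, 200]
production_counts = [300, 300, 250, 150]  # influx of younger borrowers

age_psi = psi(training_counts, production_counts)
print(f"PSI={age_psi:.4f} status={psi_status(age_psi)}")
```

Here the share of the youngest band triples, which pushes PSI to roughly 0.30 and into the alert range. PSI flags a shift in the input distribution (data drift); detecting concept drift additionally requires tracking model performance against realized outcomes.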

Pillar 4: Agentic AI Observability

The introduction of GenAI and Agentic AI into collections represents a significant governance challenge. When an AI conducts live negotiations with real customers or actively coaches human agents, institutions require real-time, comprehensive observability. Standard logging is insufficient; these systems require deep telemetry.

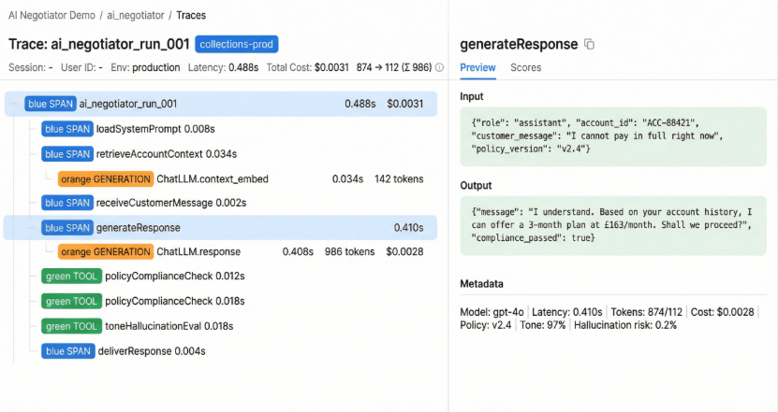

Our GenAI offerings (the customer-facing AI Negotiator and the collection agent’s assistant, AI Coach) provide advanced agentic observability out of the box. This means we do not just log the final output; we capture the entire "chain of thought."

- Tracing: Every interaction is recorded as a "trace," broken down into individual "spans." If the AI Negotiator offers a settlement plan, the system logs the exact prompt sent to the Large Language Model (LLM), the context retrieved from the database, the specific policy rules applied and the latency of the response.

- Hallucination Detection and Jailbreak Protection: We employ automated evaluation metrics to continuously score the LLM's outputs against predefined rubrics. We also integrate prompt shields to monitor and evaluate user inputs in real-time. If a user prompt is identified as potentially harmful or designed to exploit the AI, the system intervenes by blocking the response or redirecting the query to a human agent.

- Cost and Quality Monitoring: The observability layer tracks token usage and computational cost per conversation, ensuring that the AI remains not just compliant, but economically viable.

Pillar 4 - Agentic AI Trace: Every step of a chatbot conversation turn, logged in real time. The span tree on the left shows the full execution chain, from loading the compliance policy to generating the response and passing the policy check, with exact latency for each step. The panel on the right shows the full input, output and evaluation scores for the selected span.
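The trace-and-span structure can be sketched in a few lines of standard-library Python. This is an illustrative data model only, not the observability stack behind AI Negotiator or AI Coach; the `Trace` and `Span` classes, the span names, and every attribute value (policy identifier, token counts) are hypothetical. Real deployments would more likely build on an established tracing framework.

```python
import time
from contextlib import contextmanager
from dataclasses import dataclass, field


@dataclass
class Span:
    """One step of a conversation turn, with its metadata and latency."""
    name: str
    attributes: dict = field(default_factory=dict)
    latency_ms: float = 0.0
    children: list = field(default_factory=list)


class Trace:
    """A tree of spans describing a single conversation turn."""

    def __init__(self, name):
        self.root = Span(name)
        self._stack = [self.root]

    @contextmanager
    def span(self, name, **attributes):
        node = Span(name, dict(attributes))
        self._stack[-1].children.append(node)  # attach under the current parent
        self._stack.append(node)
        start = time.perf_counter()
        try:
            yield node
        finally:
            node.latency_ms = (time.perf_counter() - start) * 1000
            self._stack.pop()


def total_tokens(span):
    """Sum the 'tokens' attribute over a span and all of its descendants."""
    return span.attributes.get("tokens", 0) + sum(total_tokens(c) for c in span.children)


# Hypothetical turn: the work inside each span is stubbed out.
trace = Trace("negotiator_turn")
with trace.span("load_compliance_policy", policy_id="hypothetical-policy-v1"):
    pass
with trace.span("generate_response"):
    with trace.span("retrieve_context", source="accounts_db"):
        pass
    with trace.span("llm_call", prompt="<prompt text>", tokens=180) as llm:
        llm.attributes["completion"] = "<model output>"
with trace.span("policy_check") as check:
    check.attributes["passed"] = True

for child in trace.root.children:
    print(child.name, f"{child.latency_ms:.3f} ms", [c.name for c in child.children])
print("tokens used this turn:", total_tokens(trace.root))
```

Because every span records its own inputs, outputs and latency, a reviewer can replay why a given response was produced, and the same tree yields the per-conversation token counts needed for cost monitoring.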

Crucially, this technical tracing is governed by a strict RACI framework (Responsible, Accountable, Consulted, Informed). Combining deep technical observability with human accountability enables a "policy-bounded" environment. Our GenAI solutions have the flexibility to converse naturally, but they operate within a governance structure that prevents them from executing any action that violates institutional constraints.
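One way to picture a policy-bounded action is a deterministic guard that sits between the model's proposal and its execution. The sketch below is an assumption about how such a guard could look, not a description of the EXUS product: `SettlementPolicy`, its limits, and the action names are hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class SettlementPolicy:
    """Hypothetical institutional limits; real policies would be richer."""
    max_discount_pct: float
    max_installments: int
    max_contacts_per_week: int


def find_violations(policy, discount_pct, installments, contacts_this_week):
    """Return a human-readable reason for every limit the proposal breaks."""
    found = []
    if discount_pct > policy.max_discount_pct:
        found.append(f"discount {discount_pct}% exceeds {policy.max_discount_pct}%")
    if installments > policy.max_installments:
        found.append(f"{installments} installments exceeds {policy.max_installments}")
    if contacts_this_week >= policy.max_contacts_per_week:
        found.append("weekly contact limit reached")
    return found


def decide(policy, proposal):
    """Execute only compliant proposals; otherwise hand over to a human."""
    reasons = find_violations(policy, **proposal)
    if reasons:
        return {"action": "escalate_to_human", "reasons": reasons}
    return {"action": "send_offer", "reasons": []}


policy = SettlementPolicy(max_discount_pct=20.0, max_installments=12, max_contacts_per_week=3)

compliant = {"discount_pct": 15.0, "installments": 6, "contacts_this_week": 1}
too_generous = {"discount_pct": 35.0, "installments": 6, "contacts_this_week": 1}

print(decide(policy, compliant))
print(decide(policy, too_generous))
```

The language model remains free to phrase the conversation, while the decision to act is made by rules a compliance team can read, and each refusal carries a logged reason that can be assigned to an accountable owner.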

The Future is Governed

The organizations that will thrive in this new operating reality are those that treat AI as governed production infrastructure.

When a financial institution clearly explains its AI decisions (both globally and locally), monitors its models for drift using rigorous statistical metrics, and traces every automated conversation through advanced observability frameworks, it satisfies global regulators and builds trust with consumers.

At EXUS, we instrument every decision and every action. We design for explainability from the ground up. The result is AI that is highly effective, demonstrably fair and completely transparent.

We do not need to expose our models to prove they work. We prove they are governed.

If you are exploring how to deploy explainable, governed AI in collections, contact an EXUS expert to discuss how transparency frameworks can be implemented in practice.

The author would like to thank Konstantinos Kentrotis, Kashyap Raiyani, Davide Mastricci, and Panagiotis Tassias from the AI team for their valuable technical contributions to this article.

References

[2] Frenzo Financial Services. (2026). AI Debt Collection Platform: Increase Recovery Rates by 40%.

[3] Kompato AI. (2026). The Future of Debt Collection with AI: A 2026-2027 Forecast.

[5] Financial Conduct Authority / Bank of England. (2024). Research Note: AI in UK financial services.

[6] European Union. (2024). The EU Artificial Intelligence Act — Annex III: High-Risk AI Systems.